- Implemented an AI agent with LangChain and retrieval-augmented generation (RAG) that processes unstructured data to personalize user interactions.

- Applied generative AI methods to deliver adaptive, context-aware user experiences and boost engagement.
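
The retrieval step of a RAG pipeline can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the project's LangChain code: the bag-of-words cosine similarity stands in for a real embedding model, and `tokenize`, `retrieve`, `build_prompt`, and the `NOTES` data are all hypothetical names.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=2):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in scored[:k]]


def build_prompt(query, documents, k=2):
    """Assemble a prompt that places the retrieved context before the question."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


# Hypothetical user notes standing in for unstructured data.
NOTES = [
    "The user prefers vegetarian recipes and cooks on weekends.",
    "The user follows basketball and watches press conferences.",
    "The user asked about train schedules to Boston last week.",
]
```

Calling `build_prompt("Which vegetarian recipes should I cook?", NOTES, k=1)` returns a prompt whose context is the first note; in a full pipeline that prompt would then be passed to a language model.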

|

- Developed an AI-powered tool that generates concise summaries and engaging highlight reels from sports press conference videos, enhancing fan and media experience.

- Integrated NLP and video analysis models, including GPT-4 and Pegasus-1 (from Twelve Labs), to automate post-game recaps, interview snippets, and social media content creation.

- 🏆 Secured second place at "Twelve Labs: Multimodal AI Media & Entertainment" Hackathon

|

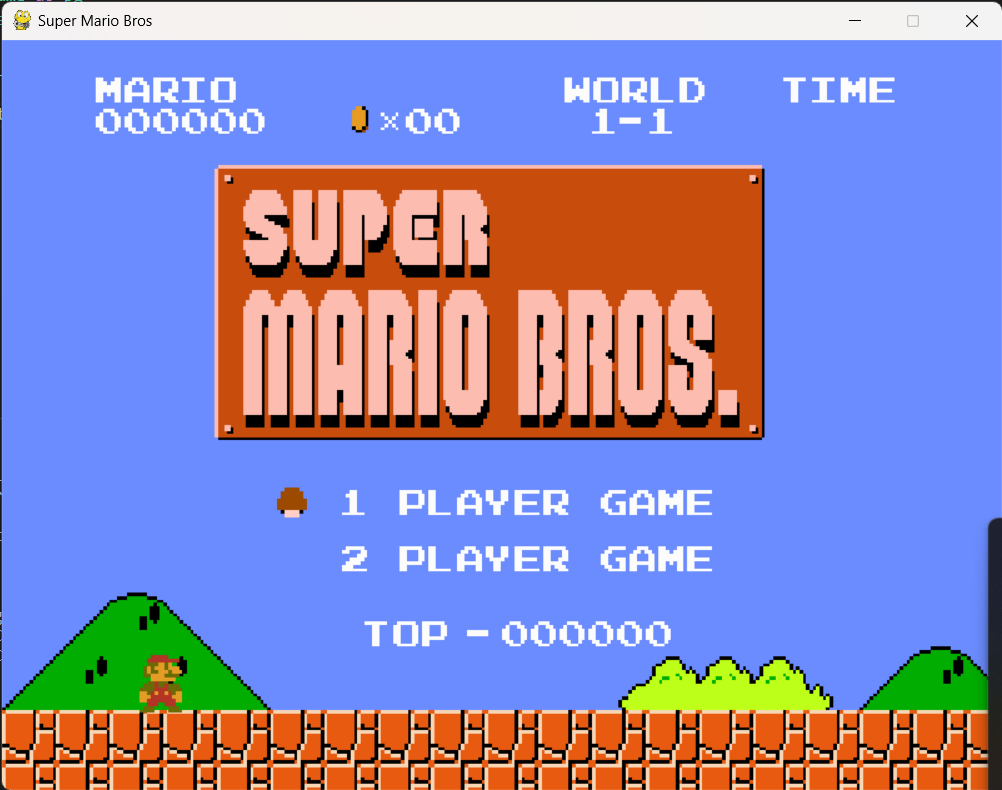

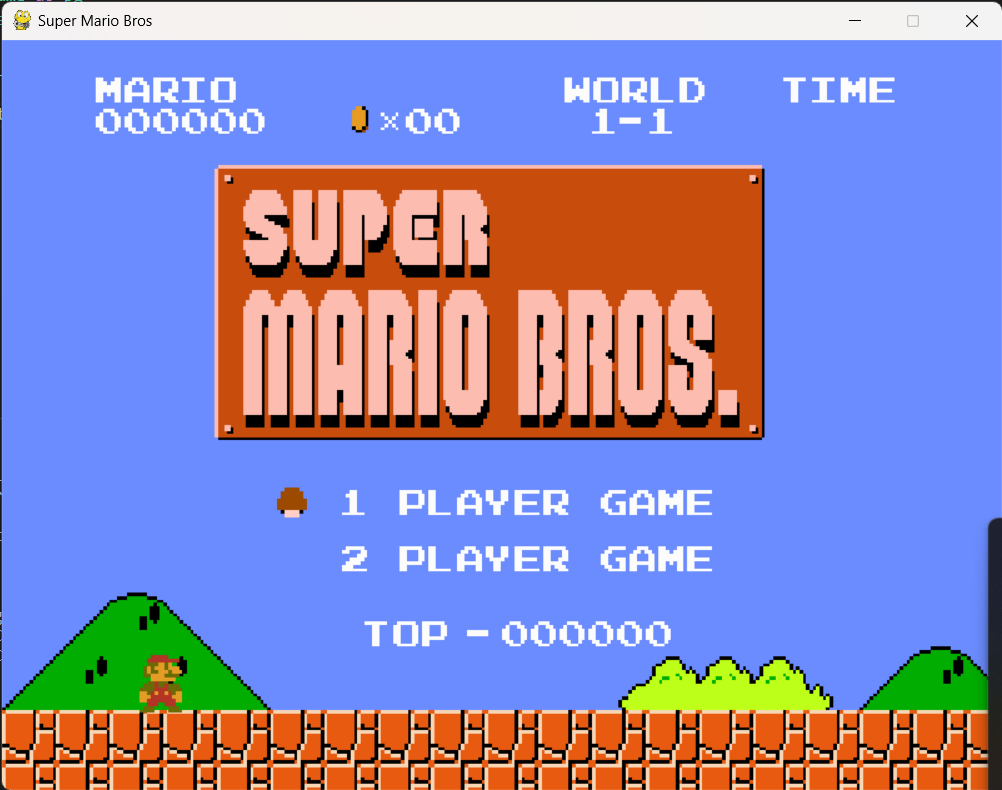

- Used the Proximal Policy Optimization (PPO) algorithm to improve gameplay strategies in "Super Mario Bros.", with the OpenAI Gym environment handling interaction with the game.

- Extracted frames and converted them to grayscale, combining a history of the previous four frames with the current one to improve performance; the agent is designed to run for one million iterations.
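
The frame preprocessing described above can be sketched as follows. This is a minimal illustration, not the project's code: `to_grayscale`, `FrameHistory`, and the default history size of five (four previous frames plus the current one) are assumptions, and frames are plain nested lists of RGB tuples rather than arrays.

```python
from collections import deque


def to_grayscale(frame):
    """Convert an RGB frame (rows of (r, g, b) tuples) to luminance values."""
    return [
        [0.299 * r + 0.587 * g + 0.114 * b for (r, g, b) in row]
        for row in frame
    ]


class FrameHistory:
    """Keep the most recent `size` grayscale frames as one observation."""

    def __init__(self, size=5):
        # Assumption: four previous frames plus the current one.
        self.size = size
        self.frames = deque(maxlen=size)

    def reset(self, frame):
        """Fill the history with copies of the first frame of an episode."""
        gray = to_grayscale(frame)
        self.frames.clear()
        for _ in range(self.size):
            self.frames.append(gray)
        return self.observation()

    def step(self, frame):
        """Append the newest frame; the oldest one is dropped automatically."""
        self.frames.append(to_grayscale(frame))
        return self.observation()

    def observation(self):
        """Return the stacked frames, oldest first."""
        return list(self.frames)
```

Stacking several frames gives the policy access to motion information that a single still frame cannot carry, such as the direction in which the character or an enemy is moving.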

|

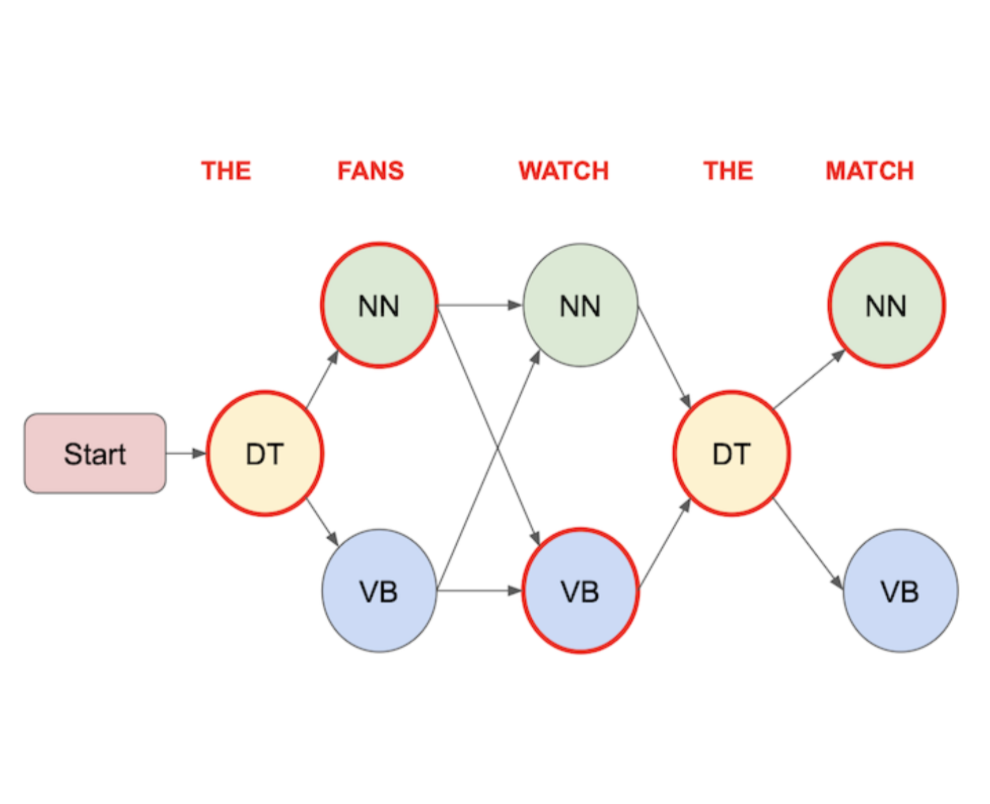

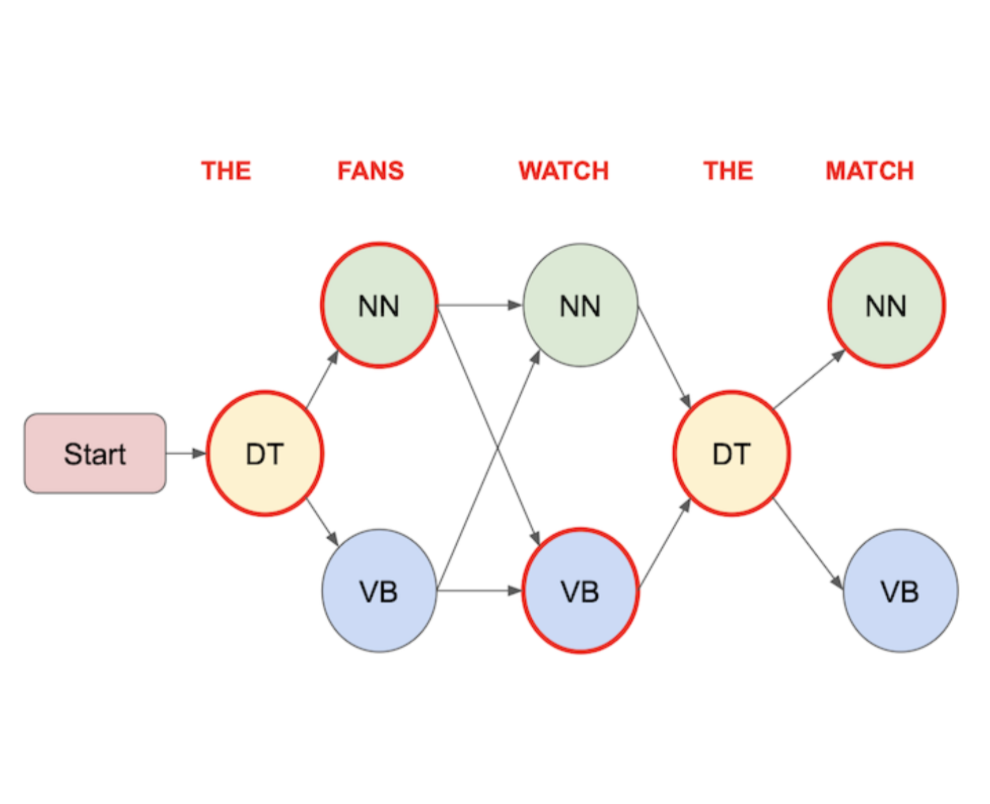

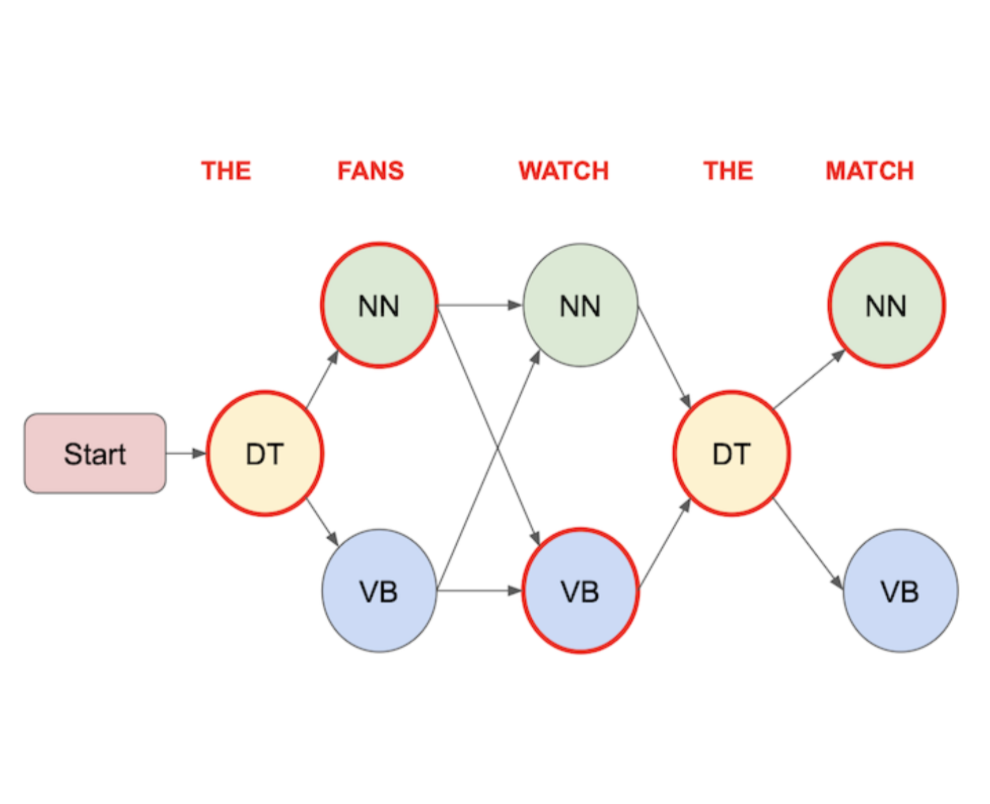

- Implemented a Hidden Markov Model for part-of-speech (POS) tagging, with both Viterbi and greedy decoding.

- Calculated transition and emission probabilities statistically from the data to identify the grammatical part of speech of each word in the text.
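
A compact sketch of the approach is shown below: count-based transition and emission estimates, then Viterbi and greedy decoding. The add-one smoothing, the `START` state, the function names, and the three-sentence `CORPUS` are assumptions made for illustration; the project's own estimation details are not stated here.

```python
import math
from collections import Counter, defaultdict

START = "<s>"  # hypothetical start-of-sentence state


def train(tagged_sentences):
    """Count tag-to-tag transitions and tag-to-word emissions."""
    trans, emit = defaultdict(Counter), defaultdict(Counter)
    for sentence in tagged_sentences:
        prev = START
        for word, tag in sentence:
            trans[prev][tag] += 1
            emit[tag][word.lower()] += 1
            prev = tag
    return trans, emit


def log_prob(counts, key, n_outcomes, alpha=1.0):
    """Add-alpha smoothed log probability of `key` under `counts`."""
    total = sum(counts.values())
    return math.log((counts[key] + alpha) / (total + alpha * n_outcomes))


def viterbi(words, trans, emit, tags, vocab_size):
    """Return the most likely tag sequence for a non-empty word list."""
    words = [w.lower() for w in words]
    n = len(tags)
    # best[i][t]: best log score of any tag path ending in tag t at position i
    best = [{t: log_prob(trans[START], t, n)
             + log_prob(emit[t], words[0], vocab_size) for t in tags}]
    back = [{}]
    for i in range(1, len(words)):
        best.append({})
        back.append({})
        for t in tags:
            prev, score = max(
                ((p, best[i - 1][p] + log_prob(trans[p], t, n)) for p in tags),
                key=lambda pair: pair[1],
            )
            best[i][t] = score + log_prob(emit[t], words[i], vocab_size)
            back[i][t] = prev
    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t])]
    for i in range(len(words) - 1, 0, -1):
        path.append(back[i][path[-1]])
    return path[::-1]


def greedy(words, trans, emit, tags, vocab_size):
    """Pick the locally best tag at each position, left to right."""
    prev, path = START, []
    for w in words:
        w = w.lower()
        prev = max(tags, key=lambda t: log_prob(trans[prev], t, len(tags))
                   + log_prob(emit[t], w, vocab_size))
        path.append(prev)
    return path


# Toy training data, invented for this example.
CORPUS = [
    [("the", "DET"), ("dog", "NOUN"), ("barks", "VERB")],
    [("a", "DET"), ("cat", "NOUN"), ("sleeps", "VERB")],
    [("the", "DET"), ("cat", "NOUN"), ("barks", "VERB")],
]
trans, emit = train(CORPUS)
TAGS = sorted(emit)
VOCAB_SIZE = len({w for s in CORPUS for w, _ in s})
```

Greedy decoding commits to one tag per word as it goes, while Viterbi scores whole paths with dynamic programming, so the two can disagree on ambiguous sentences even though both use the same probability tables.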

|

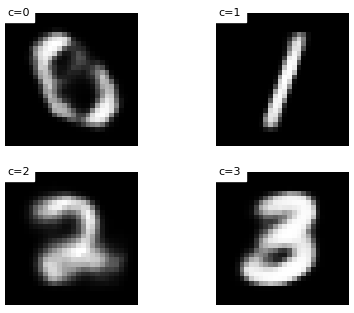

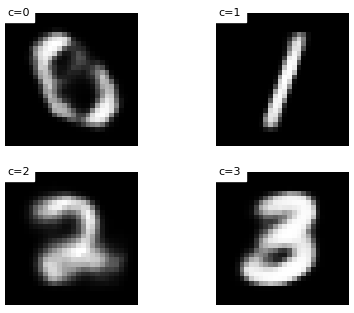

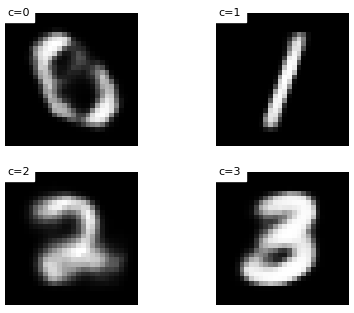

- Developed a project on "Variational Autoencoders for Digit Generation," covering the implementation and analysis of variational autoencoders (VAEs), which are known for likelihood maximization and unsupervised representation learning.

- Set up a PyTorch data loading pipeline, built an autoencoder architecture, extended it to a VAE, tuned parameters, analyzed the learned representations, and improved generative quality on the MNIST dataset of handwritten digits.

|

- Implemented a Generative Adversarial Network (GAN) based on "Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks", utilizing the CIFAR-10 dataset for training, and developed both discriminator and generator components.

- Wrote the training loop, including loss computation and parameter updates, and used activation maximization for visualization.

- Combined practical coding of advanced neural network architectures with theoretical analysis, resulting in a deepened understanding of GANs, all documented in the repository for reference.
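
The loss computation in a GAN training loop can be illustrated with the standard binary cross-entropy objectives. This is a sketch under assumptions: the repository's exact loss is not stated here, the generator uses the common non-saturating form, and `bce`, `discriminator_loss`, and `generator_loss` are hypothetical names operating on plain lists of discriminator probabilities.

```python
import math


def bce(prediction, target, eps=1e-12):
    """Binary cross-entropy for a single probability, clamped away from 0 and 1."""
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real, d_fake):
    """Mean BCE with real samples labeled 1 and generated samples labeled 0."""
    real = sum(bce(p, 1.0) for p in d_real) / len(d_real)
    fake = sum(bce(p, 0.0) for p in d_fake) / len(d_fake)
    return real + fake


def generator_loss(d_fake):
    """Non-saturating generator loss: generated samples are labeled 1."""
    return sum(bce(p, 1.0) for p in d_fake) / len(d_fake)
```

In each iteration the discriminator is updated to lower `discriminator_loss`, then the generator is updated to lower `generator_loss` on a fresh batch of generated samples.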

|

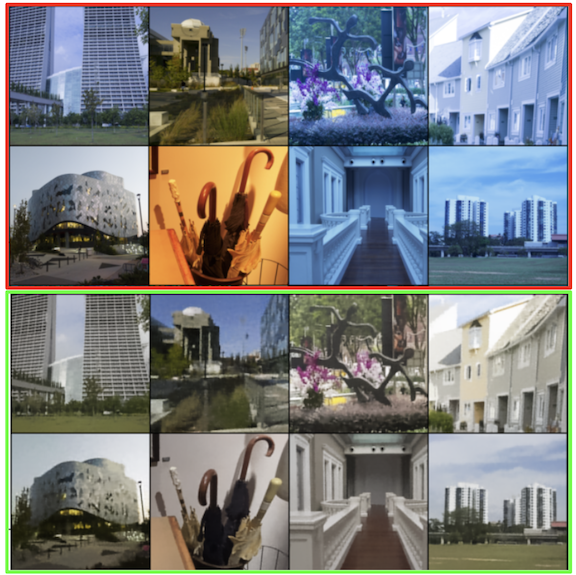

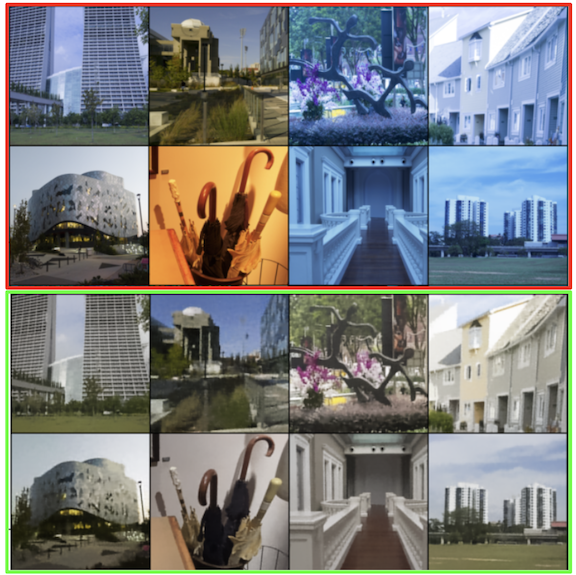

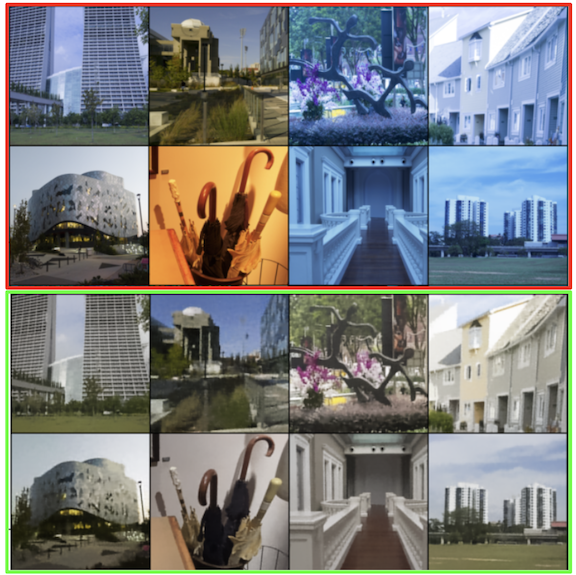

- Implemented a Variational Autoencoder Model for white balance correction in images, focusing on neutralizing color casts caused by different lighting conditions to achieve natural and accurate colors.

- Utilized the Adobe White-Balanced Images Dataset, which includes 2,881 rendered images from various camera models, including mobile phones and a DSLR camera, for testing and experiments.

- Developed an encoder with convolutional layers and ReLU activation, a reparameterization technique for sampling from the latent space, and a decoder with linear and transposed convolutional layers, complemented by a loss function combining reconstruction loss and Kullback-Leibler (KL) Divergence loss.
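
The reparameterization step and the combined loss can be written out in a few lines. This is a minimal standard-library sketch rather than the project's PyTorch code: the squared-error reconstruction term, the `beta` weight, and all function names are assumptions, and vectors are plain Python lists.

```python
import math
import random


def reparameterize(mu, log_var, rng=random):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), elementwise."""
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
        for m, lv in zip(mu, log_var)
    ]


def kl_divergence(mu, log_var):
    """KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return -0.5 * sum(
        1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var)
    )


def reconstruction_loss(x, x_hat):
    """Sum of squared errors (an assumption; the loss type is not specified)."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat))


def vae_loss(x, x_hat, mu, log_var, beta=1.0):
    """Reconstruction loss plus beta-weighted KL divergence."""
    return reconstruction_loss(x, x_hat) + beta * kl_divergence(mu, log_var)
```

Sampling through `mu + sigma * eps` keeps the randomness in `eps`, so gradients can flow to the encoder outputs `mu` and `log_var` during training.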

|

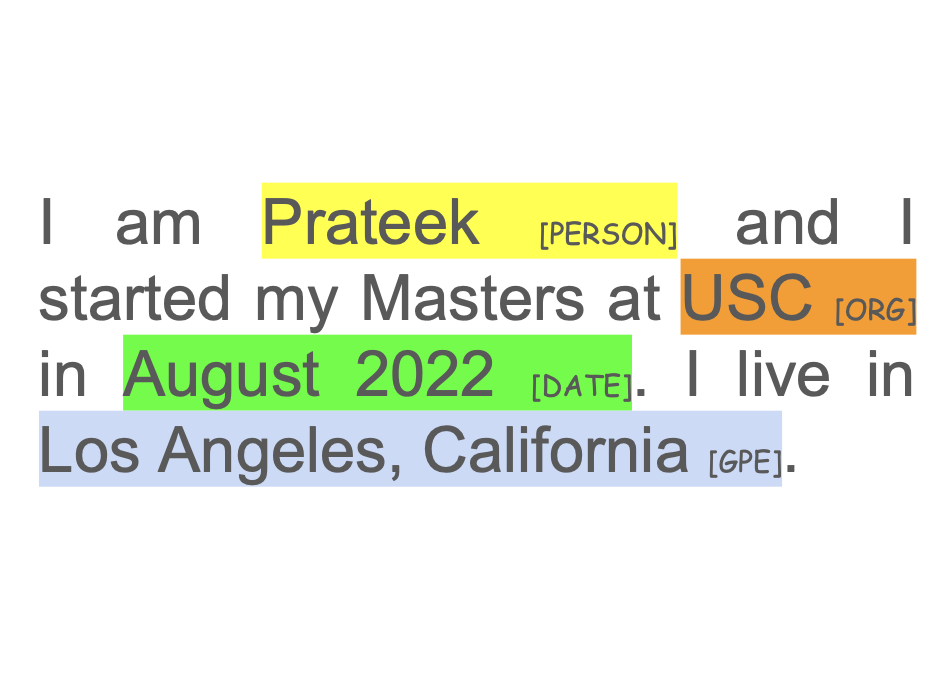

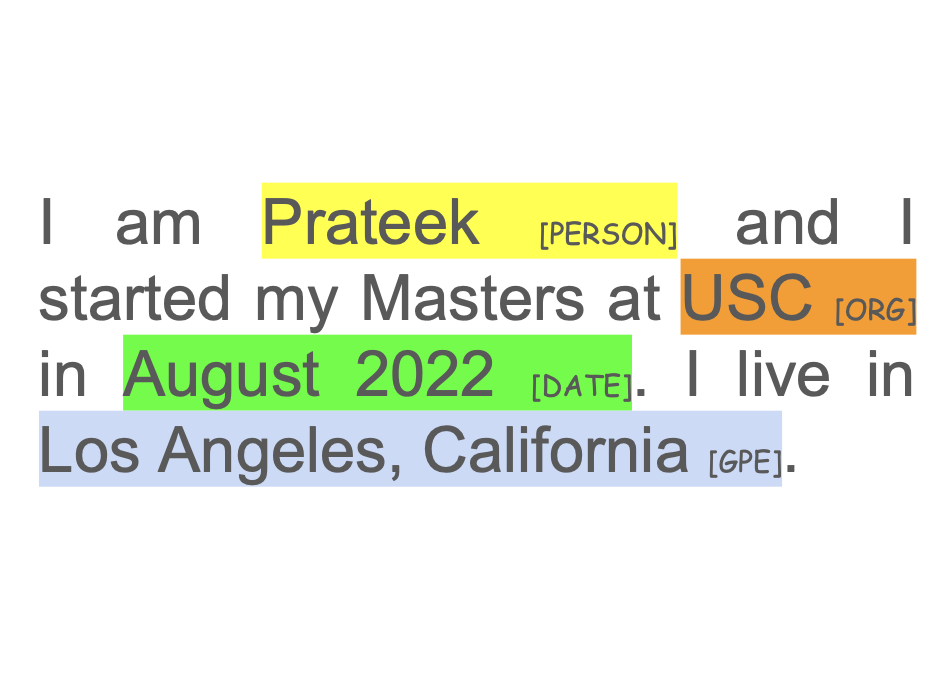

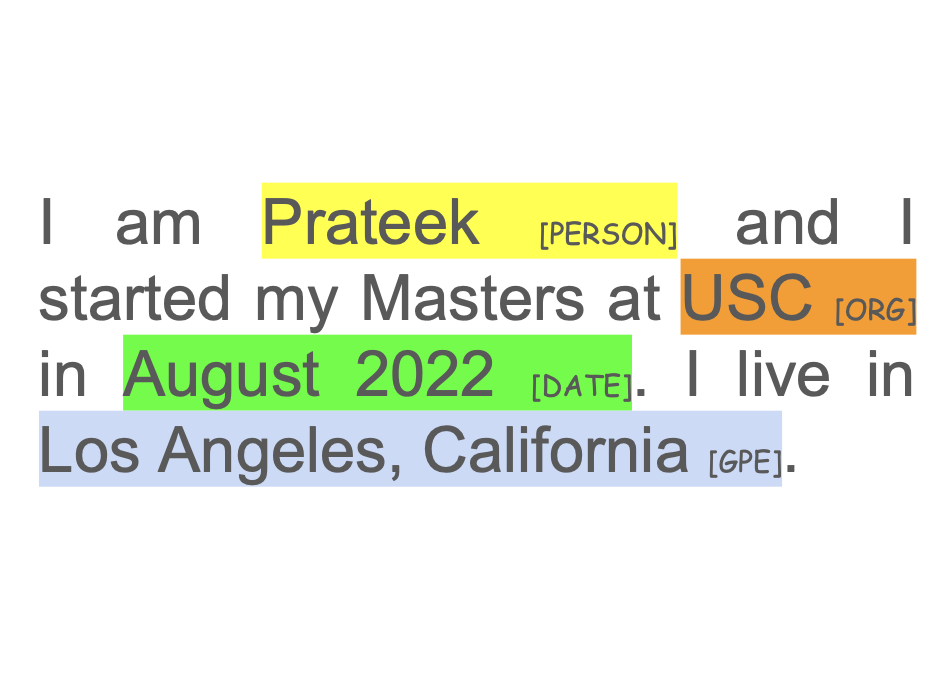

- Implemented a bidirectional LSTM (BLSTM) for named entity recognition (NER) using GloVe word embeddings. Initialized the embedding layer with GloVe vectors and, because GloVe is case-insensitive, added a boolean case mask to keep entity recognition case-sensitive.

- Built the model from Embedding, LSTM, Linear, Dropout, and ELU layers and trained it with the SGD optimizer (batch size 64, learning rate 0.5) for 50 epochs, achieving an accuracy of 98.43%.
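
One way to build the case mask alongside lowercase GloVe lookups is sketched below. The definition of the flag (first character is uppercase), the `case_features` helper, and the tiny `VOCAB` mapping are assumptions for illustration; the project may define its mask differently.

```python
def case_features(tokens, vocab, unk_index=0):
    """Map tokens to (embedding index, is_capitalized flag) pairs.

    The index is looked up with the lowercased token, matching a
    case-insensitive embedding table; the flag preserves the casing signal.
    """
    features = []
    for token in tokens:
        index = vocab.get(token.lower(), unk_index)
        flag = 1 if token[:1].isupper() else 0
        features.append((index, flag))
    return features


# Hypothetical vocabulary standing in for the GloVe word-to-index mapping.
VOCAB = {"<unk>": 0, "apple": 1, "bought": 2, "an": 3}
```

With this mapping, "Apple" and "apple" share embedding index 1 but differ in the flag, which can be concatenated to the embedding before the LSTM so the model can tell a company name from a fruit.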

|